You've probably noticed that AI has started showing up differently lately. Not just as a chatbot that answers questions, but as something that actually does things. Books meetings. Writes and runs code. Pulls data from multiple places and hands you the finished output.

That's what people mean when they say agentic AI. It's a real shift in how these systems work — and it's worth understanding properly, without the noise.

Agentic AI is an AI system that can work toward a goal across multiple steps, on its own, without someone guiding every move.

Regular AI tools respond to one input at a time. You ask, it answers. That's it. An agentic system is different — you give it a goal, and it figures out what steps to take, takes them, checks the result, and keeps going until the job is done.

The word comes from 'agency' — the ability to act independently. That's the simplest way to think about it.

A chatbot is reactive. You type something, it replies. It may remember the conversation so far, but there's no ongoing task and no independent action: nothing happens until you send the next message.

An agentic AI is different in three concrete ways:

It can use tools. It doesn't just generate text. It can search the web, read files, call APIs, run code, send emails — actual actions, not just words.

It works in sequences. Step 1 leads to step 2 leads to step 3. It's not answering a question — it's running a process.

It makes mid-task decisions. If something doesn't work at step 2, it adjusts and tries again. It doesn't stop and ask you what to do next.
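To make the "adjusts and tries again" idea concrete, here is a minimal Python sketch. The tool names (`live_api`, `cached_copy`) and the helper `first_working` are made up for illustration, and the fallback order is fixed in advance; in a real agent the language model would usually choose what to try next.

```python
def first_working(sources):
    """Try each (name, fetch) pair in order and return the first result that works."""
    errors = []
    for name, fetch in sources:
        try:
            return name, fetch()
        except Exception as exc:  # a failed step is recorded, not fatal
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all sources failed: " + "; ".join(errors))

# Hypothetical tools: a live API that times out and a cache that works.
def live_api():
    raise TimeoutError("request timed out")

def cached_copy():
    return 19.99

source, price = first_working([("live_api", live_api), ("cache", cached_copy)])
print(source, price)  # cache 19.99
```

The task only stops when every option has failed, and even then it reports what went wrong at each attempt.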

A simple example: a regular chatbot can tell you how to write a database query. An agentic AI can connect to the database, write the query, run it, check the output, and hand you back a formatted report.
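The database example can be sketched in a few lines of Python using the standard `sqlite3` module. This is an illustration under assumptions, not how any particular agent product works: the `orders` table and its sample rows are invented, and the query is hard-coded where a real agent would have the language model write it.

```python
import sqlite3

def run_report(conn: sqlite3.Connection) -> str:
    """Run a query, check the output, and format a short report."""
    query = "SELECT status, COUNT(*) FROM orders GROUP BY status ORDER BY status"
    rows = conn.execute(query).fetchall()
    # Check step: an empty result is treated as a failure, not a report.
    if not rows:
        raise ValueError("query returned no rows")
    lines = ["Orders by status"]
    lines += [f"{status}: {count}" for status, count in rows]
    return "\n".join(lines)

# Hypothetical sample data in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)")
conn.executemany(
    "INSERT INTO orders (status) VALUES (?)",
    [("shipped",), ("shipped",), ("pending",)],
)
report = run_report(conn)
print(report)
```

The part worth noticing is the check between running the query and formatting the result. That is the step a plain chatbot never gets to perform.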

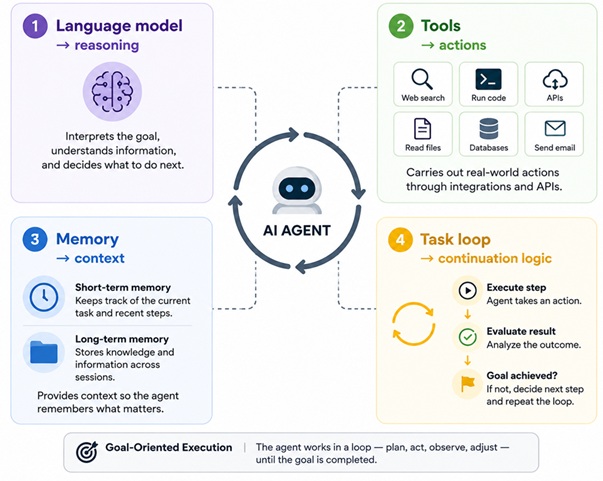

An AI agent usually has four components working together:

A language model — the reasoning part. It reads the goal, decides what to do next, and interprets results.

Tools — the action part. These are integrations the agent can use: search, code execution, API calls, file systems, databases.

Memory — context across steps. Short-term memory keeps track of what's happened in the current task. Some agents also have longer-term memory across sessions.

A task loop — the logic that keeps it going. After each step, it asks: is the goal done? If not, what's next?

None of these parts are magic on their own. But together they produce behaviour that looks very different from a standard AI assistant.
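Here is a rough sketch of how the four parts fit together, in Python. Everything in it is a stand-in: `decide` plays the role of the language model using simple rules, and `search` and `summarize` are hypothetical tools that return canned strings. A real agent would call an actual model and actual integrations.

```python
from typing import Callable, Optional

# Tools: plain functions the agent is allowed to call (hypothetical examples).
def search(query: str) -> str:
    return f"3 results for '{query}'"

def summarize(text: str) -> str:
    return f"summary of [{text}]"

TOOLS = {"search": search, "summarize": summarize}

def decide(goal: str, memory: list) -> Optional[tuple]:
    """Stand-in for the language model: return the next (tool, argument), or None when done."""
    if not memory:
        return ("search", goal)
    if memory[-1][0] == "search":
        return ("summarize", memory[-1][1])
    return None  # goal reached

def run_agent(goal: str, max_steps: int = 5) -> list:
    memory = []                      # short-term memory for this task
    for _ in range(max_steps):       # task loop with a hard step limit
        action = decide(goal, memory)
        if action is None:           # "is the goal done?"
            break
        tool_name, argument = action
        result = TOOLS[tool_name](argument)
        memory.append((tool_name, result))
    return memory

history = run_agent("agentic AI pricing")
for tool_name, result in history:
    print(tool_name, "->", result)
```

The `max_steps` limit is a design choice worth keeping in any version of this loop: it stops an agent that never decides it is finished.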

This isn't a concept that's years away. Companies are already using it.

Software development: Agents read bug reports, find the problem in the code, write a fix, run the tests, and flag the result for a human to approve.

Customer support: Agents look up accounts, check order status, process refunds, and update records — handling the whole interaction without escalating.

Data workflows: Agents pull from multiple sources, clean the data, run analysis, and produce a report — on a schedule, automatically.

Research: Agents search across sources, extract what's relevant, and compile a usable summary.

HR and operations: Agents screen applications, send responses, schedule interviews, and keep tracking systems updated.

These aren't demos. Businesses running lean teams are using agents to handle volume that would otherwise require more people.

Agents are best at repetitive, structured work that follows a predictable pattern.

Gather information. Check a condition. Take an action. Move to the next step. That describes a large share of day-to-day business operations, and it's exactly what agents handle well.

Beyond handling volume, an agent reduces errors from manual handoffs. When a task moves between people or departments, things get lost, delayed, or done inconsistently. An agent that owns the whole sequence removes most of those handoffs.

For smaller teams especially, this matters. A 10-person company can run workflows that previously needed a larger headcount.
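The gather, check, act pattern is simple enough to show in a few lines of Python. The `Invoice` type, the 30-day threshold, and the action names below are all invented for the example.

```python
from dataclasses import dataclass

@dataclass
class Invoice:
    number: str
    days_overdue: int

def process(invoices):
    """Return the action chosen for each invoice that needs one."""
    actions = []
    for invoice in invoices:                                # gather information
        if invoice.days_overdue > 30:                       # check a condition
            actions.append(f"escalate {invoice.number}")    # take an action
        elif invoice.days_overdue > 0:
            actions.append(f"remind {invoice.number}")
    return actions                                          # move to the next step

actions = process([Invoice("A1", 45), Invoice("A2", 3), Invoice("A3", 0)])
print(actions)  # ['escalate A1', 'remind A2']
```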

Agentic AI has real limitations. Anyone deploying it in a live environment needs to know them.

Errors can compound. Because an agent works through multiple steps without checking in, a wrong decision early can carry through to a wrong result at the end. The problem isn't always obvious until you see the output.
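A quick back-of-the-envelope calculation shows why this matters. The sketch below assumes each step succeeds 95% of the time and that steps fail independently of each other. Both are simplifying assumptions, not measured figures.

```python
def chain_success_rate(step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming steps fail independently."""
    return step_success ** steps

for steps in (1, 5, 10, 20):
    print(steps, round(chain_success_rate(0.95, steps), 3))
# 1 0.95
# 5 0.774
# 10 0.599
# 20 0.358
```

Under those assumptions, a step that looks reliable on its own gives you a ten-step task that finishes cleanly only about 60% of the time.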

It needs a well-defined goal. Vague instructions produce vague or incorrect results. The clearer the task, the better the agent performs.

It doesn't handle novelty well. Situations that fall outside what it was designed for often produce unreliable results. It's not adaptable in the way a person is.

Access and permissions matter a lot. An agent connected to email, databases, and external APIs has real reach. Poor configuration can lead to unintended actions — and that's hard to reverse.
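One common way to limit that reach is an allowlist: the agent can only call tools it has been explicitly granted. The sketch below is one possible shape for that idea in Python. `ScopedTools`, `read_order`, and `delete_order` are hypothetical names, not part of any real framework.

```python
class ToolNotAllowed(Exception):
    """Raised when an agent tries to call a tool it was not granted."""

class ScopedTools:
    """Expose only the tools an agent has been explicitly granted."""

    def __init__(self, tools, allowed):
        self._tools = dict(tools)
        self._allowed = frozenset(allowed)

    def call(self, name, *args):
        # Deny by default: anything not on the allowlist is refused.
        if name not in self._allowed:
            raise ToolNotAllowed(f"agent may not call '{name}'")
        return self._tools[name](*args)

# Hypothetical tools for a support agent.
def read_order(order_id):
    return {"id": order_id, "status": "shipped"}

def delete_order(order_id):
    return f"deleted {order_id}"

support_agent_tools = ScopedTools(
    tools={"read_order": read_order, "delete_order": delete_order},
    allowed={"read_order"},
)
print(support_agent_tools.call("read_order", 7))
```

Here a call to `delete_order` raises `ToolNotAllowed` instead of running, so a misjudged step fails loudly rather than doing something hard to undo.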

These problems are being actively worked on. They're not reasons to avoid the technology, but they are reasons to be deliberate about how it's set up.

What is the difference between agentic AI and a chatbot?

A chatbot responds to individual messages. Agentic AI carries out multi-step tasks using tools and independent decision-making. A chatbot answers questions. An agent takes actions.

Does agentic AI need human supervision?

It depends on the task. Most deployments include checkpoints where a person approves certain actions before the agent continues. Fully autonomous agents exist but are mostly used for well-defined, low-risk workflows where the stakes of a wrong action are limited.
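A checkpoint can be as simple as a threshold. In this Python sketch, small refunds go through automatically and larger ones wait for a person. The `refund` function, the 50.00 limit, and the `approve` callback are assumptions made up for the example; in practice `approve` might post to a review queue or a chat channel.

```python
from typing import Callable

def refund(amount: float, approve: Callable[[str], bool], limit: float = 50.0) -> str:
    """Run low-risk refunds automatically; pause for a person above `limit`."""
    if amount <= limit:
        return f"refunded {amount:.2f} automatically"
    # Above the limit, nothing happens until the reviewer answers.
    if approve(f"Refund of {amount:.2f} exceeds {limit:.2f}. Approve?"):
        return f"refunded {amount:.2f} after approval"
    return "refund declined by reviewer"

# Stand-in reviewer that approves everything, for demonstration only.
print(refund(20, approve=lambda prompt: True))
print(refund(200, approve=lambda prompt: True))
```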

Is agentic AI the same as AGI?

No. AGI refers to a hypothetical system with broad human-level intelligence. Agentic AI is task-specific. It works well in defined contexts but doesn't generalise the way a person does. The two terms are not interchangeable.

Can agentic AI replace employees?

It can replace specific tasks — particularly ones that are repetitive and structured. It's much less suited to work requiring judgment, creativity, or interpersonal communication. Most real deployments augment existing teams rather than replace them outright.

Which industries are most ready for agentic AI?

Software development, finance, logistics, customer service, and HR have the most active real-world deployments today. These industries share structured data, clear processes, and high volumes of repetitive work — which is where agents perform best.

How should a business start with agentic AI?

Pick one workflow that is high-volume, low-risk, and follows a predictable pattern. Start there, measure results, fix what doesn't work, and expand from that foundation. Starting broad rarely goes well.

Is agentic AI safe to use with sensitive business data?

Only if the system is configured carefully. Agents with access to sensitive data need strict permission controls, audit logs, and clear data policies. Off-the-shelf tools are not automatically safe for sensitive environments — that has to be built in deliberately.
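As an illustration of what an audit log can look like, here is a small Python sketch that wraps a tool so every call is recorded, whether it succeeds or fails. The `audited` wrapper and the `lookup_customer` tool are hypothetical, and the log is a plain in-memory list; a real deployment would write to durable, access-controlled storage.

```python
import json
from datetime import datetime, timezone

def audited(tool, log):
    """Wrap a tool so every call is appended to `log` as a JSON line."""
    def wrapper(*args):
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "tool": tool.__name__,
            "args": list(args),  # note: arguments may themselves be sensitive
        }
        try:
            result = tool(*args)
            entry["ok"] = True
            return result
        except Exception as exc:
            entry["ok"] = False
            entry["error"] = str(exc)
            raise
        finally:
            # Runs on success and on failure, so no call goes unrecorded.
            log.append(json.dumps(entry, default=str))
    return wrapper

# Hypothetical tool and an in-memory log.
def lookup_customer(customer_id):
    return {"id": customer_id, "tier": "gold"}

audit_log = []
lookup = audited(lookup_customer, audit_log)
lookup(42)
print(audit_log[0])
```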

Agentic AI is a real change in how software handles work — moving from tools that respond to tools that act. It's not suited to everything, and it's not without risk. But for structured, multi-step business processes, it's already proving useful for teams that have been deliberate about where and how they use it.
